Gefei (Frank) Gu 顾格非

I am currently a master’s student at Carnegie Mellon University in the Language Technologies Institute, working with Prof. Daniel Fried and Prof. Sean Welleck on efficient reasoning for language models and agents. I previously completed my undergraduate studies in Statistics at Zhejiang University. I have worked as a research intern at Alibaba Group, focusing on large-scale reinforcement learning to enhance the agentic search capabilities of LLMs.

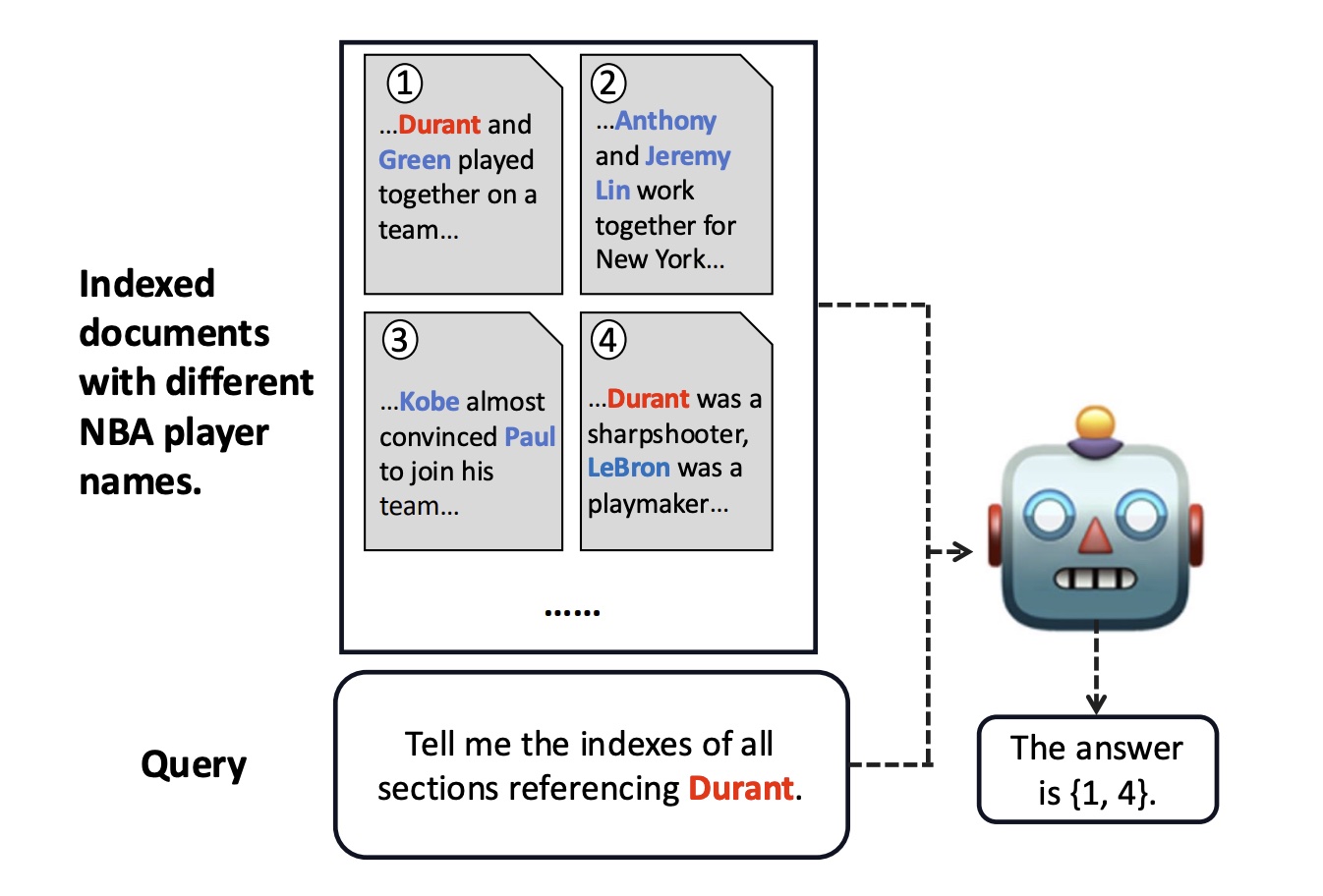

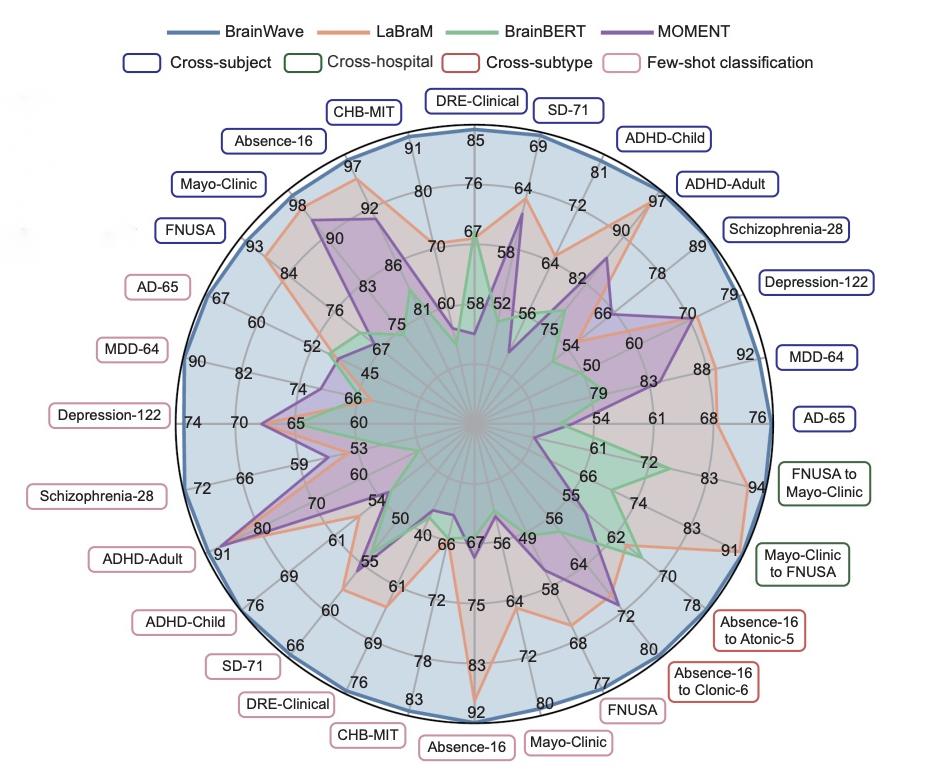

Previously, I worked with Prof. Arman Cohan and the YaleNLP group on LLM evaluation and information retrieval, and with Prof. Yang Yang on AI4Science.

Honors and Awards

- Outstanding Student, Zhejiang University

- First Prize Scholarship, Zhejiang University (Top 3%)

News

| Date | News |
|---|---|
| Aug 01, 2025 | Joining CMU as a master’s student! |
| Jul 15, 2025 | Serving as a reviewer for the EMNLP Demo Track. |
| Jun 20, 2025 | One paper (Product-Searcher) submitted to the EMNLP Industry Track! |
| May 10, 2025 | One paper (Ref-long) accepted to the ACL main conference! |
| Mar 01, 2025 | Joining Alibaba Group as a research intern! |

Selected publications

- Industry Track

Product-Searcher: Real-World E-commerce Product DeepSearch via Reinforcement Learning. 2025. Submitted to EMNLP Industry Track.